MASE State of AI — February 2026

Published: February 7, 2026

Authors: MASE Research

Classification: Public Distribution

1. The Big Picture: Where AI Stands in February 2026

The Capability-Deployment Gap Widens

February 2026 marks a peculiar inflection point in the AI industry. We have never had more powerful, more accessible, more affordable AI systems. And yet, the gap between what AI can do and what enterprises actually deploy has never been wider.

This gap has become the defining challenge of enterprise AI. In boardrooms across the Fortune 500, executives face a paradox: the technology works, the business case is clear, yet implementation remains frustratingly elusive. Understanding why—and what to do about it—is the central question this report addresses.

The answer is not more AI capability. Every model release brings marginal improvements that barely move the enterprise needle. The answer lies in organizational capacity: data readiness, process clarity, governance frameworks, talent development, and above all, realistic expectations about what implementation requires.

Consider the contrast:

Model Capabilities (February 2026):

- Frontier models routinely score 95%+ on graduate-level reasoning benchmarks

- Multimodal understanding spans text, images, video, audio, and code with near-human performance

- Context windows have expanded to 2M+ tokens, enabling full-codebase understanding

- Real-time voice interaction with sub-200ms latency is now standard

- Agentic capabilities allow models to execute multi-step workflows autonomously

Enterprise Deployment Reality:

- 78% of enterprises report AI initiatives "behind schedule" (Gartner, Q4 2025)

- Mean time from pilot to production: 14.3 months (up from 11.2 months in 2024)

- Average enterprise uses only 23% of purchased AI platform capabilities

- 62% of AI projects are still "experiments" with no production timeline

This gap is not a technology problem. It is an organizational, strategic, and execution problem. The companies that recognize this distinction are the ones capturing value.

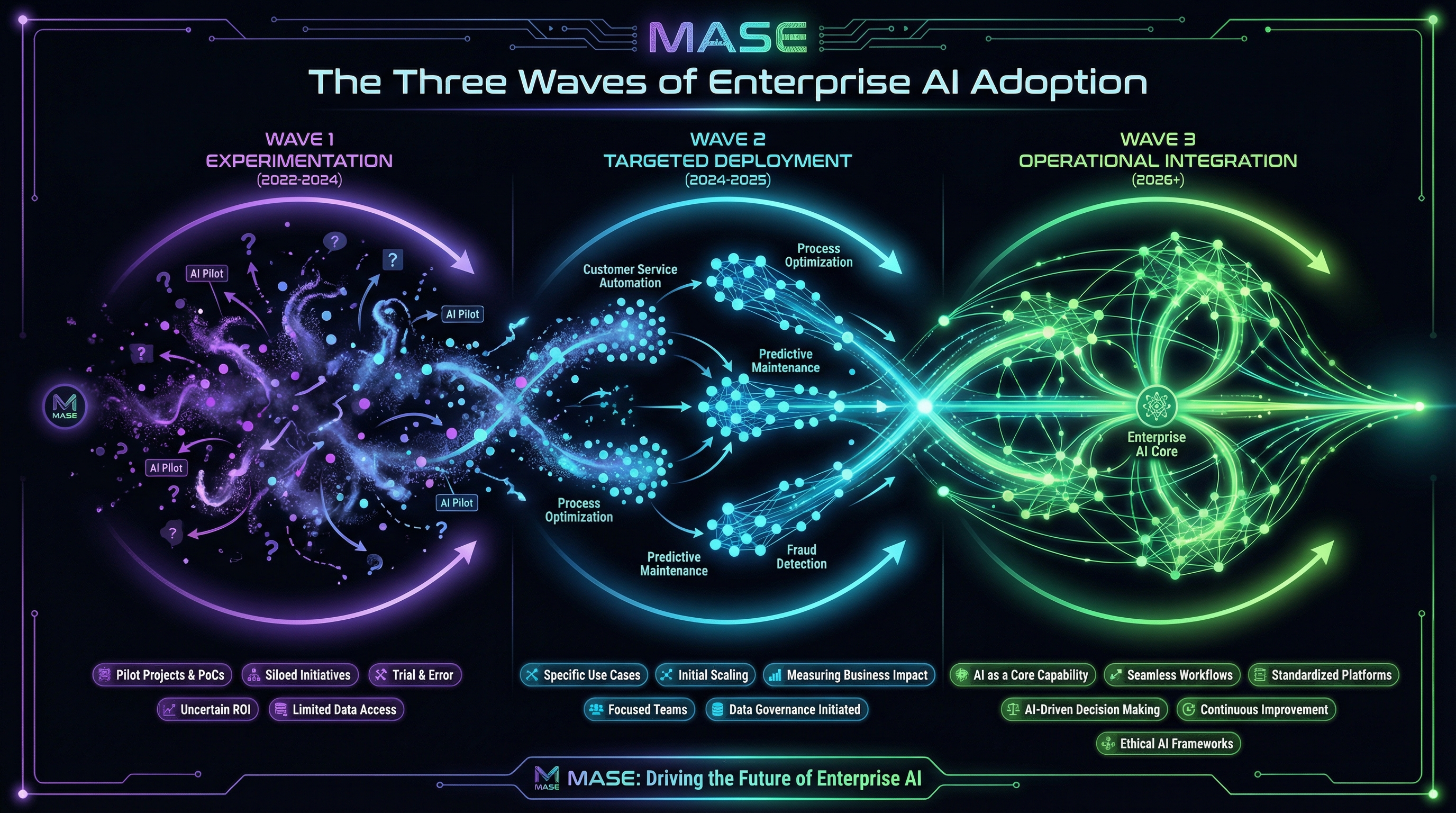

The Three Waves of Enterprise AI

We observe enterprises distributed across three distinct waves of AI adoption:

| Wave | Characteristics | % of Fortune 500 | Typical Use Cases |

| Wave 1: Productivity | Point solutions, individual tools, no integration | 45% | ChatGPT Enterprise, Copilot, email drafting |

| Wave 2: Process | Workflow integration, API connections, structured outputs | 42% | Customer service automation, document processing, code review |

| Wave 3: Autonomous | Agentic systems, multi-step reasoning, self-directed workflows | 13% | Automated research, supply chain optimization, fraud detection |

The acceleration into Wave 3 is the defining trend of early 2026. Companies that mastered Wave 2 in 2025 are now reaping exponential returns as they deploy autonomous agents. Those still struggling with Wave 1 face an increasingly steep climb.

"The AI adoption curve isn't linear—it's exponential. Companies three years behind aren't three years behind; they're a generation behind."

— Dr. Erik Brynjolfsson, Stanford Digital Economy Lab

2. Model Updates This Month

Claude 4.6 (Anthropic) — Released January 28, 2026

Anthropic's latest release focuses on enterprise reliability and extended reasoning:

- Enhanced instruction following: 34% improvement on complex multi-constraint tasks

- Reduced hallucination rate: Down to 1.2% on factual queries (from 2.8% in Claude 4.5)

- Extended thinking mode: Native chain-of-thought for complex reasoning tasks

- Tool use improvements: 89% success rate on multi-step tool workflows (up from 76%)

GPT-5.2 (OpenAI) — Released February 3, 2026

OpenAI's incremental update to the GPT-5 series brings:

- Improved multilingual performance: 28% gains in non-English languages

- Faster inference: 40% latency reduction for standard queries

- Enhanced vision capabilities: Near-human performance on document understanding

- Operator mode improvements: Better reliability for agentic use cases

Gemini 3 Ultra (Google) — Released January 15, 2026

Google's flagship model brings massive scale and multimodal capabilities:

- 2M token context window: Largest production context available

- Native multimodal reasoning: Seamless text-image-video-audio integration

- Deep Google Workspace integration: Native understanding of enterprise data graphs

- Improved code generation: Tops benchmarks on repository-scale coding tasks

Llama 4 (Meta) — Released January 22, 2026

Meta's open-weights release democratizes frontier capabilities:

- Comparable to GPT-5 on most benchmarks

- Fully open weights for self-hosting and fine-tuning

- 405B parameter flagship with efficient smaller variants

- Commercial license for enterprise deployment

Model Selection Framework

For enterprises evaluating model choices, we recommend this decision matrix:

| Priority | Recommended Model | Rationale |

| Reliability & Safety | Claude 4.6 | Lowest hallucination rates, strongest instruction following |

| Microsoft Ecosystem | GPT-5.2 | Deep Azure/M365 integration |

| Google Ecosystem | Gemini 3 Ultra | Native Workspace integration, largest context |

| Data Sovereignty | Llama 4 | Self-hosted, full control |

| Cost Optimization | Llama 4 or Claude Haiku | Best performance per dollar |
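
The decision matrix above can be read as a simple lookup from priority to candidate models. The following is a minimal sketch of that reading, not a selection tool: the key strings, the `recommend_models` function, and the idea of ranking models by how many priorities they match are illustrative assumptions that do not come from the matrix itself.

```python
# The decision matrix, encoded as a plain lookup.
# Key names and the ranking rule below are assumptions for illustration.
MODEL_BY_PRIORITY = {
    "reliability_and_safety": ["Claude 4.6"],
    "microsoft_ecosystem": ["GPT-5.2"],
    "google_ecosystem": ["Gemini 3 Ultra"],
    "data_sovereignty": ["Llama 4"],
    "cost_optimization": ["Llama 4", "Claude Haiku"],
}


def recommend_models(priorities):
    """Return candidate models ordered by how many stated priorities they match."""
    counts = {}
    for priority in priorities:
        for model in MODEL_BY_PRIORITY[priority]:
            counts[model] = counts.get(model, 0) + 1
    # Highest match count first; ties broken alphabetically for a stable order.
    return sorted(counts, key=lambda model: (-counts[model], model))


# An organization that cares about both data sovereignty and cost.
print(recommend_models(["data_sovereignty", "cost_optimization"]))
```

In practice most enterprises hold several priorities at once, which is why the sketch ranks candidates rather than returning a single answer.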

3. Enterprise Adoption: What the Data Shows

The 95% Pilot Failure Rate

The most sobering statistic in enterprise AI remains unchanged: 95% of AI pilots fail to reach production.

This figure, first documented by MIT Sloan in 2024, has persisted through 2025 and into 2026 despite:

- Dramatically improved model capabilities

- Better tooling and MLOps platforms

- Increased enterprise AI budgets

- Growing organizational experience

| Failure Mode | % of Failed Pilots | Root Cause |

| Unclear Success Metrics | 34% | Pilots launched without defined business outcomes |

| Integration Complexity | 28% | Underestimated effort to connect with existing systems |

| Data Quality Issues | 19% | Training/inference data didn't match production reality |

| Stakeholder Misalignment | 12% | Business and technical teams had different expectations |

| Model Limitations | 7% | Actual capability gaps (increasingly rare) |

The critical insight: only 7% of failures are due to model limitations. The other 93% are organizational failures that no amount of model improvement will fix.

This finding should fundamentally reshape how enterprises approach AI. The conventional narrative—"AI isn't ready for enterprise"—has it exactly backwards. AI has been ready. Enterprises haven't been.

The organizations succeeding with AI in 2026 share a counterintuitive trait: they spend more time on organizational preparation than on technology selection. They document processes before automating them. They define success metrics before launching pilots. They secure executive sponsorship with real accountability, not nominal "support." They invest in change management as seriously as they invest in models.

The Pilot Trap

Most enterprises fall into what we call the "pilot trap": a self-reinforcing cycle where pilots are launched without clear success criteria, declared victories based on subjective impressions, and then never scaled because no one established what "success" actually meant.

The pilot trap creates organizational learned helplessness. After multiple pilots that go nowhere, organizations develop antibodies against AI initiatives. "We tried AI, it didn't work" becomes the institutional memory—even though the organization never actually tried AI in a rigorous way.

Breaking the pilot trap requires discipline that feels almost boring compared to the excitement of new AI capabilities:

The Partnership Advantage: 67% vs. 22%

McKinsey's January 2026 analysis of 847 enterprise AI initiatives revealed a striking pattern:

Organizations that used strategic AI partnerships achieved 67% success rates. Those that built purely internally achieved only 22%.

This isn't about outsourcing AI strategy. The most successful partnerships share common characteristics:

The least successful approaches:

- Pure outsourcing ("make AI happen for us")

- Pure internal ("we'll figure it out ourselves")

- Vendor-led implementations without business ownership

The partnership advantage is not about capability—many enterprises have the talent to build. It's about three specific factors:

The key word is strategic partnership. This means:

- The enterprise owns the strategy and outcomes

- The partner brings implementation expertise and pattern recognition

- Knowledge transfer is explicit and structured

- The enterprise builds capability to reduce partner dependence over time

What doesn't work is tactical outsourcing: hiring a vendor to "implement AI" without internal ownership, clear objectives, or a path to capability building. This produces expensive pilots that never scale because no internal capability exists to sustain them.

Agentic AI: The 7% to 13% Jump

The fastest-moving metric in enterprise AI is agent deployment. In Q3 2025, only 7% of enterprise AI implementations included agentic capabilities. By Q4, that figure reached 13%—an 86% increase in one quarter.
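
The 86% figure is a relative change, which is easy to confuse with the six-percentage-point absolute change. A short sketch of the arithmetic, using only the two shares quoted above (the function name is an illustrative choice):

```python
def relative_increase(before, after):
    """Relative change from `before` to `after`, expressed as a percentage."""
    return (after - before) / before * 100


q3_share = 7.0   # % of implementations with agentic capabilities, Q3 2025
q4_share = 13.0  # same metric, Q4 2025

print(f"Absolute change: {q4_share - q3_share:.0f} percentage points")
print(f"Relative change: {relative_increase(q3_share, q4_share):.0f}%")
```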

What's driving the acceleration:

| Industry | Agent Adoption Rate | Primary Use Cases |

| Financial Services | 24% | Fraud detection, compliance monitoring, customer service |

| Technology | 21% | Code review, security scanning, DevOps automation |

| Healthcare | 14% | Prior authorization, clinical documentation, scheduling |

| Retail | 12% | Inventory optimization, customer service, pricing |

| Manufacturing | 9% | Quality control, supply chain, predictive maintenance |

| Other | 6% | Varies |

Not every organization should deploy agents today. We recommend evaluating agent readiness across five dimensions:

| Dimension | Ready | Not Ready |

| Process Definition | Well-documented workflows with clear decision points | Ad hoc processes dependent on tribal knowledge |

| Error Tolerance | Mistakes are catchable and correctable | Errors are irreversible or high-consequence |

| Data Availability | Rich context accessible via API or structured data | Information locked in unstructured documents or human heads |

| Monitoring Capability | Can observe agent actions and intervene | Black-box operations with no visibility |

| Fallback Path | Clear escalation to human handling | No graceful degradation available |

Organizations scoring "ready" on 4+ dimensions should be actively exploring agent deployment. Those with 2-3 should be building foundational capabilities. Those with 0-1 should focus on process and data fundamentals before considering agents.
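
The scoring rule above can be sketched as a small function. This is one possible encoding under stated assumptions: each dimension is reduced to a yes/no judgment, and the dimension keys and function name are hypothetical labels chosen for the example. The tier thresholds (4+, 2-3, 0-1) are the ones given in the text.

```python
# The five readiness dimensions from the table; key names are illustrative.
DIMENSIONS = (
    "process_definition",
    "error_tolerance",
    "data_availability",
    "monitoring_capability",
    "fallback_path",
)


def agent_readiness(assessment):
    """Map a {dimension: bool} assessment to (score, recommendation)."""
    missing = set(DIMENSIONS) - set(assessment)
    if missing:
        raise ValueError(f"Unassessed dimensions: {sorted(missing)}")
    # Count how many dimensions were judged "ready".
    score = sum(bool(assessment[d]) for d in DIMENSIONS)
    if score >= 4:
        return score, "actively explore agent deployment"
    if score >= 2:
        return score, "build foundational capabilities"
    return score, "focus on process and data fundamentals"


# Hypothetical organization: strong processes and fallbacks, weak elsewhere.
example = dict.fromkeys(DIMENSIONS, False)
example["process_definition"] = True
example["fallback_path"] = True
print(agent_readiness(example))
```

Reducing each dimension to a boolean hides nuance, so the score is best treated as a conversation starter rather than a gate.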

The Agent Deployment Mistake

The most common mistake in early agent deployments: treating agents as better chatbots. Agents are not conversational interfaces that do more; they are autonomous systems that operate independently.

This distinction has profound implications:

- Chatbots require humans to drive the interaction. The human asks, the AI responds.

- Agents operate on objectives. The human defines the goal, the AI determines and executes the approach.

Organizations deploying agents with chatbot mental models produce systems that are simultaneously over-supervised (breaking autonomy) and under-monitored (lacking appropriate guardrails). The result is neither a good chatbot nor a good agent, just an expensive mess.

4. What's Working: Real Implementation Success Stories

Klarna: $40M Annual Savings in Customer Service

The Challenge: Klarna's customer service operation handled 2.3 million conversations monthly across 23 markets and 35 languages. Traditional automation captured only 12% of inquiries.

The Solution: Deployment of an AI-powered customer service agent capable of handling complex, multi-turn conversations including refunds, disputes, and payment plan modifications.

The Results (as of January 2026):

- 67% of customer service conversations fully automated

- Average resolution time: 2 minutes (down from 11 minutes)

- Customer satisfaction: 4.2/5.0 (matching human agents)

- Annual cost savings: $40 million

- 700 FTE equivalent workload absorbed without headcount reduction (attrition absorbed)

"We didn't automate customer service. We augmented it. The AI handles routine complexity so our humans can handle genuine exceptions."

— Sebastian Siemiatkowski, CEO, Klarna

Lessons from Klarna:

The Klarna case is often cited as a simple automation story. The reality is more nuanced and more instructive:

Walmart: Supply Chain Optimization

The Challenge: Walmart's supply chain spans 10,500+ stores, 150+ distribution centers, and relationships with 100,000+ suppliers. Traditional demand forecasting models struggled with the scale and volatility.

The Solution: AI-powered demand forecasting and inventory optimization using a combination of transformer models, real-time data integration, and agentic replenishment systems.

The Results (FY2025):

- Inventory carrying costs reduced by $1.2 billion

- Out-of-stock incidents down 23%

- Food waste reduced by 30% through improved fresh goods prediction

- Supplier coordination automated for 40% of routine orders

JPMorgan Chase: Fraud Detection at Scale

The Challenge: JPMorgan processes 6 billion transactions annually. Traditional rule-based fraud detection generated excessive false positives (blocking legitimate transactions) while missing sophisticated fraud patterns.

The Solution: Multi-model AI system combining real-time transaction analysis, behavioral pattern recognition, and an agentic investigation system for complex cases.

The Results (2025):

- Fraud losses reduced by $150 million annually

- False positive rate down 40%

- Investigation time for complex fraud reduced from 12 hours to 2 hours

- New fraud patterns detected an average of 4.3 days faster

Common Patterns in Success Stories

Analyzing successful enterprise AI implementations, we identify consistent patterns:

| Pattern | Presence in Successful Deployments |

| Clear, measurable business outcome defined upfront | 94% |

| Executive sponsor with P&L accountability | 89% |

| Human-in-the-loop for edge cases | 87% |

| Iterative deployment (not big bang) | 85% |

| Dedicated integration resources | 82% |

| Explicit success metrics tracked weekly | 78% |

| Post-deployment feedback loops | 74% |

5. What's Overhyped vs. Underrated

OVERHYPED

1. AGI Timelines

The discourse around Artificial General Intelligence has reached fever pitch, with predictions ranging from "already here" to "2027 at the latest." The reality:

- Current models excel at narrow tasks but struggle with genuine transfer learning

- No model demonstrates true world-modeling or causal reasoning

- The goalposts for "AGI" keep moving as capabilities improve

- For enterprise purposes, the AGI question is irrelevant—current capabilities already exceed most organizational ability to deploy

2. Job Replacement Predictions

Every month brings new studies claiming AI will replace 30%, 50%, or 80% of jobs. The evidence shows a different pattern:

- Tasks, not jobs, are automated

- New tasks emerge faster than old ones disappear

- The labor market impact is gradual, not sudden

- The constraint is organizational change capacity, not AI capability

3. Retrieval-Augmented Generation (RAG)

RAG was 2024's darling. In 2026, we see its limitations:

- RAG quality depends entirely on retrieval quality

- Most enterprise data isn't structured for effective retrieval

- "Just add RAG" often produces worse results than thoughtful prompt engineering

- Maintenance burden for RAG systems is chronically underestimated

UNDERRATED

1. Prompt Engineering as a Discipline

Many organizations dismiss prompt engineering as "typing better." The evidence suggests otherwise:

- Well-engineered prompts routinely outperform fine-tuned models on enterprise tasks

- The difference between a good prompt and a great prompt can be a 3-5x performance improvement

- Prompt engineering skills compound—good practitioners get exponentially better

- No model training required, enabling rapid iteration

2. Process Documentation

The unsexy prerequisite for successful AI deployment: actually understanding and documenting your processes.

Organizations with comprehensive process documentation deploy AI 3.2x faster and achieve 2.4x better outcomes than those that don't. Yet only 23% of enterprises have documentation sufficient for AI implementation.

Business Implication: Your AI roadmap should start with process documentation. It's foundational, not optional.

3. AI for Internal Productivity (Not Just Customer-Facing)

The headline AI wins are customer-facing: chatbots, personalization, fraud detection. But the highest ROI applications are often internal:

- Legal document review: 85% time reduction

- Sales enablement: 40% reduction in research time

- Technical documentation: 60% reduction in creation time

- Internal knowledge management: 70% reduction in search time

6. The Talent Landscape: Emerging Roles

The Shifting Demand Curve

Traditional ML Engineer hiring has plateaued. The new growth roles:

| Role | YoY Growth (2025) | Average Salary (US) | Key Skills |

| AI Systems Integrator | +340% | $185,000 | Enterprise architecture, API integration, MLOps |

| Prompt Engineering Lead | +280% | $165,000 | Model behavior, structured prompting, evaluation |

| AI Product Manager | +210% | $195,000 | AI capabilities, product strategy, cross-functional leadership |

| AI Ethics/Governance Officer | +180% | $175,000 | Policy, compliance, risk assessment |

| AI Solutions Architect | +160% | $205,000 | Infrastructure, scalability, cost optimization |

The Skills Gap Reality

The most sought-after skills aren't model training—they're:

Recommendations for Talent Strategy

For Enterprises:

- Upskill existing employees rather than competing for scarce AI specialists

- Partner for implementation, build for strategy

- Create AI councils that combine technical and business expertise

- Establish prompt engineering centers of excellence

For Individuals:

- Learn to evaluate AI output critically

- Develop expertise in your domain + AI application (the combination is rare)

- Focus on integration and systems thinking

- Build prompt engineering skills regardless of your role

7. Cost Trends and ROI Reality

The Paradox of Falling Costs

Inference costs have plummeted:

| Model Tier | Cost per 1M Tokens (Feb 2025) | Cost per 1M Tokens (Feb 2026) | Reduction |

| Frontier | $30.00 | $8.10 | -73% |

| Mid-tier | $8.00 | $1.50 | -81% |

| Lightweight | $2.00 | $0.20 | -90% |
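
The reduction column follows directly from the two price columns. A short sketch that recomputes it from the table's figures (the dictionary layout and function name are illustrative):

```python
# tier: (Feb 2025 price, Feb 2026 price), USD per 1M tokens, from the table
PRICES = {
    "Frontier": (30.00, 8.10),
    "Mid-tier": (8.00, 1.50),
    "Lightweight": (2.00, 0.20),
}


def reduction_pct(old_price, new_price):
    """Percentage drop from old_price to new_price."""
    return (old_price - new_price) / old_price * 100


for tier, (old_price, new_price) in PRICES.items():
    print(f"{tier}: -{reduction_pct(old_price, new_price):.0f}%")
```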

Yet total enterprise AI spend increased 47% year-over-year. Where is the money going?

Enterprise AI Total Cost of Ownership (2026):

| Cost Category | % of TCO | Trend |

| Integration & Development | 34% | ↑ Rising |

| Talent & Training | 28% | ↑ Rising |

| Governance & Compliance | 15% | ↑ Rising |

| Infrastructure | 14% | → Stable |

| Model Inference | 9% | ↓ Falling |

The inference cost that dominates headlines represents less than 10% of enterprise AI spend. The real costs—integration, talent, governance—continue rising.

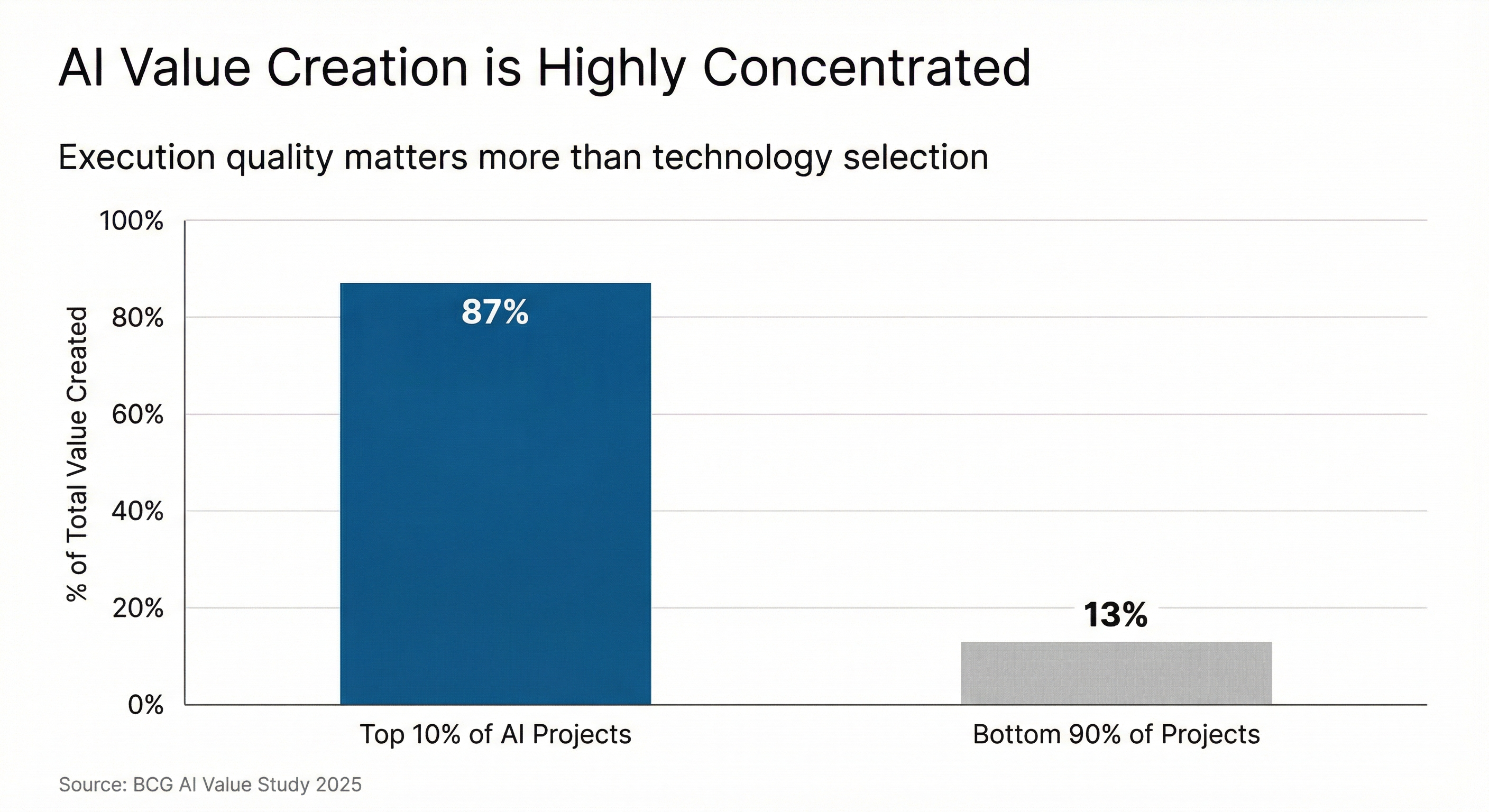

ROI Concentration

The distribution of AI ROI is highly skewed:

- Top 10% of implementations capture 87% of documented value

- Middle 40% captures 12% of value

- Bottom 50% captures 1% of value (or negative returns)
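
One way to make the skew concrete is to compare each segment's share of value with its share of implementations. The sketch below uses only the three figures above; the "value density" ratio and its name are an illustrative framing, not a metric defined in this report.

```python
# (label, share of implementations in %, share of documented value in %)
SEGMENTS = [
    ("Top 10%", 10, 87),
    ("Middle 40%", 40, 12),
    ("Bottom 50%", 50, 1),
]


def value_density(implementation_share, value_share):
    """Value captured per implementation relative to an even split (1.0 = even)."""
    return value_share / implementation_share


for label, implementation_share, value_share in SEGMENTS:
    density = value_density(implementation_share, value_share)
    print(f"{label}: {density:.2f}x an even share")
```

By this measure a top-decile implementation captures roughly 29 times the value of one in the middle 40% (8.7 divided by 0.3).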

This concentration is not primarily explained by:

- Industry (successful implementations span all industries)

- Budget (some of the highest ROI implementations are low-cost)

- Model selection (similar technologies produce wildly different results)

It is primarily explained by:

- Execution quality (clear metrics, iterative deployment, business ownership)

- Use case selection (high-frequency, well-defined, measurable)

- Organizational readiness (data quality, process documentation, change capacity)

This concentration of returns creates a strategic imperative: being average at AI provides no competitive advantage. The median AI implementation is essentially a break-even proposition when total costs are honestly accounted for. Only excellent implementations generate meaningful returns.

What Excellent Looks Like

The top 10% of implementations share distinguishing characteristics:

CFOs consistently underestimate AI total cost of ownership because they focus on visible costs (model inference, platform licenses) and miss hidden costs:

| Hidden Cost | Typical Range (% of year 1 spend) |

| Data preparation and cleaning | 35-50% |

| Integration engineering | 25-40% |

| Change management and training | 15-25% |

| Ongoing monitoring and maintenance | 20-30% annually |

| Governance and compliance | 10-20% |

A realistic AI budget for a mid-sized deployment should assume 2.5-3.5x the model/platform cost for total first-year investment.
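
As a rough planning aid, the 2.5-3.5x rule of thumb can be turned into a budget range. This is a minimal sketch: the function name is hypothetical and the $400,000 platform cost is an invented example figure, not data from this report.

```python
def first_year_budget(platform_cost, low_multiple=2.5, high_multiple=3.5):
    """Return the (low, high) first-year investment range for a given
    model/platform cost, using the 2.5-3.5x rule of thumb as defaults."""
    if platform_cost < 0:
        raise ValueError("platform_cost must be non-negative")
    return platform_cost * low_multiple, platform_cost * high_multiple


# Hypothetical mid-sized deployment with $400,000 in model/platform cost.
low, high = first_year_budget(400_000)
print(f"Plan for ${low:,.0f} to ${high:,.0f} in year one")
```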

The ROI Framework

We recommend enterprises evaluate AI opportunities using this framework:

| Factor | High ROI Indicator | Low ROI Indicator |

| Frequency | Daily/hourly task | Monthly/quarterly task |

| Definition | Clear inputs and outputs | Ambiguous judgment required |

| Measurability | Quantifiable outcomes | Subjective quality |

| Data Availability | Rich historical data | Sparse or unstructured data |

| Error Tolerance | Mistakes are correctable | Errors are catastrophic |

| Human Bottleneck | Limited by human capacity | Other constraints dominate |
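
The framework can be applied as a simple screening pass over candidate use cases. The sketch below counts how many of the six factors show the high-ROI indicator and ranks candidates by that count. Equal weighting, the factor keys, the function names, and both example use cases are assumptions made for illustration; the framework itself does not prescribe weights or thresholds.

```python
# The six factors from the framework table; key names are illustrative.
FACTORS = (
    "frequency",
    "definition",
    "measurability",
    "data_availability",
    "error_tolerance",
    "human_bottleneck",
)


def high_roi_count(use_case):
    """Count the factors for which the use case shows the high-ROI indicator."""
    return sum(bool(use_case.get(factor, False)) for factor in FACTORS)


def rank_use_cases(candidates):
    """Sort use-case names by descending indicator count, then by name."""
    return sorted(candidates, key=lambda name: (-high_roi_count(candidates[name]), name))


# Two hypothetical candidates, each marked True where the high-ROI indicator applies.
candidates = {
    "invoice_triage": dict.fromkeys(FACTORS, True),
    "annual_strategy_memo": {"data_availability": True},
}
print(rank_use_cases(candidates))
```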

8. Predictions for Q1 2026

Confident (>80% probability)

Probable (50-80% probability)

Speculative (30-50% probability)

The Q1 2026 Investment Thesis

For investors and board members evaluating AI-related opportunities, our framework emphasizes:

Overweight:

- Companies with demonstrable AI implementations and measurable outcomes

- AI infrastructure and integration platforms (picks and shovels)

- Vertical-specific AI applications in underserved industries

- Companies reducing AI dependency on scarce ML talent

- AI startups without clear path to differentiated data or distribution

- "AI-powered" feature additions to commodity products

- Model providers without unique capability or cost advantage

- Enterprise AI deployments dependent on single vendor lock-in

- Agent infrastructure and orchestration layers

- AI governance and compliance tooling

- Synthetic data generation for training

- Edge AI deployment in industrial applications

The next 90 days will likely see valuation compression for AI companies that cannot demonstrate concrete enterprise adoption. The market is shifting from "AI capability potential" to "AI value realization."

9. Recommendations for Business Leaders

If You're Just Starting (Wave 1)

If You're Scaling (Wave 2)

If You're Advanced (Wave 3)

Universal Advice

- The bottleneck is organizational, not technological. Invest accordingly.

- Partnerships outperform pure builds for most organizations. Check your ego.

- Speed matters more than perfection. The learning curve is steep; start climbing.

- AI success follows data quality. You can't shortcut the fundamentals.

- The goal is business outcomes, not AI deployment. Never forget the difference.

A Final Thought

The most important insight from our research is also the simplest: AI success is a management problem, not a technology problem.

The organizations winning at AI in 2026 are not necessarily the most technically sophisticated. They are the most disciplined. They define clear outcomes, measure relentlessly, iterate systematically, and maintain organizational focus despite the constant pull of shiny new capabilities.

Every month brings revolutionary new models, breathless announcements, and predictions of imminent disruption. Ignore most of it. The fundamentals of successful AI deployment haven't changed since 2024: clear use case, quality data, defined metrics, iterative deployment, business ownership, realistic timelines.

Master the boring fundamentals. That's where the value is.

Methodology Note

This report synthesizes data from the following sources:

Primary Research:

- Structured interviews with 47 enterprise AI leaders (December 2025 - January 2026)

- Analysis of 312 public enterprise AI case studies

- Review of quarterly reports and investor presentations from major AI vendors

Secondary Sources:

- McKinsey Global AI Survey 2025 (n=1,841)

- BCG AI Adoption Study Q4 2025 (n=2,100)

- Stanford HAI AI Index 2025

- MIT Sloan Management Review (various)

- Gartner AI Hype Cycle 2025

- LinkedIn Workforce Report

- Company earnings calls and press releases

Limitations:

- Enterprise AI outcomes are notoriously difficult to verify independently

- Publication bias favors success stories over failures

- Rapidly changing conditions may quickly render some findings obsolete

- Sample skews toward larger enterprises with more mature AI programs

We welcome corrections, additional data, and alternative perspectives. Contact research@mase-services.com.

About MASE

MASE (Mase AI Services & Education) provides AI strategy consulting, implementation support, and executive education for organizations navigating the AI transition. Our approach emphasizes practical outcomes over theoretical capabilities, organizational readiness over technology selection, and sustainable value creation over pilot proliferation.

Contact: research@mase-services.com

Web: mase-services.com

© 2026 Mase Services LLC. This report may be freely distributed with attribution.